I’ve spent many hours attempting to install numpy and scipy from source on both Linux at work and Mac OS X at home. I could never get the big speedups possible from good numerical libraries like BLAS and ATLAS. Enthought’s one-click-install Python distribution turned out to be the perfect solution.

Jan 22

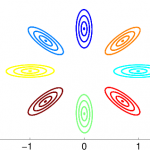

Why probability contours for the multivariate Gaussian are elliptical

Every 2D Gaussian concentrates its mass at a particular point (a “bump”), with mass falling off steadily away from its peak. If we plot regions that have the *same* height on the bump (the same density under the PDF), it turns out they have a particular form: an ellipse. In this post, I’ll use math to show why it is an ellipse.
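
As a rough sketch of where that argument lands (the notation here is my own assumption, not necessarily what the full post uses): write the mean as $\mu$, the covariance as $\Sigma$ with eigendecomposition $\Sigma = U \Lambda U^\top$, and fix a density level $c$ below the peak height. Setting the 2D density equal to $c$ and rotating into the eigenvector basis gives the standard equation of an ellipse with semi-axes $r\sqrt{\lambda_1}$ and $r\sqrt{\lambda_2}$:

```latex
\begin{align*}
p(x) &= \frac{1}{2\pi\,|\Sigma|^{1/2}}
        \exp\!\left(-\tfrac{1}{2}(x-\mu)^\top \Sigma^{-1} (x-\mu)\right) \\
p(x) = c \;&\Longleftrightarrow\; (x-\mu)^\top \Sigma^{-1} (x-\mu) = r^2,
        \qquad r^2 = -2\log\!\left(2\pi\,|\Sigma|^{1/2}\,c\right) \\
\Sigma = U \Lambda U^\top,\;\; y = U^\top (x-\mu)
        \;&\Longrightarrow\; \frac{y_1^2}{\lambda_1} + \frac{y_2^2}{\lambda_2} = r^2
\end{align*}
```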

Nov 13

Practical Bayesian Optimization with Spearmint

Recently, I’ve become interested in using Gaussian Processes for hyperparameter optimization. Coincidentally, there’s a great NIPS 2012 paper by Jasper Snoek, Hugo Larochelle, and Ryan Adams about that very topic. Thankfully, not only is the paper insightful, but they also have Python source code available, called “spearmint” (I guess they chose the name so that it sounds like “experiment”). This post documents my experience using this code on a simple toy example. This is mostly about the nuts and bolts of using the code, but I definitely recommend reading the paper.

Sep 30

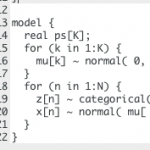

Review of STAN: off-the-shelf Hamiltonian MCMC

Recently, some folks at Andrew Gelman’s research lab have released a new and exciting inference package called STAN. STAN is designed to do MCMC inference “off-the-shelf”, given just observed data and a BUGS-like definition of the probabilistic model. I’ve played around with STAN in some detail, and my high-level review is summarized here:

Good:

- Installation is involved but straightforward and quite well-documented.

- STAN has an active and enthusiastic developer team. Ask a question on the Google group, and you’ll often get a same-day (or even same-hour) response.

- STAN does Hamiltonian MCMC via automatic differentiation and principled automatic tuning, so you (1) don’t need to compute any gradients analytically, and (2) don’t need to set any parameters manually!

- STAN is also wicked fast on some toy examples it was designed for.

Caveats:

- STAN (version 1.0) does not support *any* sampling of discrete variables. You’ll need to invest human time in marginalizing out discrete variables, esp. for common machine learning approaches like mixture models.

- Vectorizing the model definition can work wonders, but requires some detailed human knowledge of STAN’s inner guts.

- The type system is a bit confusing. For example, to enforce that a variable must be at least 1:

  - This is legal: `real<lower=1> x;`

  - This isn’t: `vector<lower=1> xvec;`

- Some language features I expected were mysteriously lacking:

  - cannot slice-index variables (e.g. MyMatrix[ 1:5,:] )

  - cannot transpose matrices
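
To make the first caveat above concrete, here is a minimal sketch of what “marginalizing out discrete variables” means for a 1D Gaussian mixture. It is plain Python rather than STAN model code, and the function names (`log_sum_exp`, `normal_logpdf`, `mixture_loglik`) are my own for illustration. Instead of sampling each point’s cluster assignment, the assignment is summed out of the likelihood, leaving a function of the continuous parameters only:

```python
import math


def log_sum_exp(values):
    """Numerically stable log(sum(exp(v) for v in values))."""
    m = max(values)
    if m == -math.inf:
        return -math.inf
    return m + math.log(sum(math.exp(v - m) for v in values))


def normal_logpdf(x, mean, sd):
    """Log density of a 1D Gaussian with the given mean and standard deviation."""
    z = (x - mean) / sd
    return -0.5 * z * z - math.log(sd) - 0.5 * math.log(2.0 * math.pi)


def mixture_loglik(data, weights, means, sds):
    """Log-likelihood of data under a Gaussian mixture.

    The discrete assignment of each point is summed out:
    log p(x) = log sum_k weights[k] * N(x | means[k], sds[k]).
    """
    total = 0.0
    for x in data:
        per_component = [
            math.log(w) + normal_logpdf(x, m, s)
            for w, m, s in zip(weights, means, sds)
        ]
        total += log_sum_exp(per_component)
    return total
```

The result depends only on continuous quantities (weights, means, standard deviations), which is the form a gradient-based sampler needs.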

Punchline: I don’t think STAN is quite ready to be used by Machine Learning researchers as a black-box tool to prototype models. It lacks fine control over when to do discrete vs. continuous updates, and thus scales poorly to moderately sized datasets (it took *minutes* to run 5 iterations on an LDA topic model with just 100 documents and 7000 words). However, I do believe that the automatic approach to Hamiltonian MCMC is sensible, and hopefully down the road this package might be more viable.

Read on to see detailed comments and code examples.

Sep 01

Using Voodoo to Estimate Handheld Camera Motion

I’m currently investigating fully automatic methods to determine the motion of handheld cameras in unconstrained environments. Basically, if action recognition is going to work well in unconstrained videos, we need video representations that distinguish between motions that are “signal” (moving actors, moving objects) and motions that are irrelevant noise due to camera motion (panning, zooming, shake, etc.).

Today, I checked out one out-of-the-box solution: an open-source tool called Voodoo. Voodoo seems originally designed with graphics and special effects industries in mind, but . I found it quite easy to install and get up and running. Unfortunately, I found its performance on my test suite of two hand-held camera sequences to be disastrous. Perhaps I’m asking more than it was designed for. Read on for more…

Aug 30

Dense Trajectories for Action Recognition

Here, I take a qualitative look at one of the most successful video representations in use today: dense trajectories. You can find the original CVPR 2011 paper by Wang, Klaser, Schmid, and Liu here: Action Recognition by Dense Trajectories.

I’m mostly interested in evaluating how well the provided code does at finding good trajectories for videos from the Olympic Sports dataset, which have lots of camera motion, both deliberate (pan, zoom, etc.) and accidental (shake). The example videos here show that this approach is really vulnerable to this noise, so it probably requires further preprocessing to remove errant trajectories that aren’t firing on the motion of interest. Their “Motion Boundaries” descriptor might help distinguish camera motion from “signal”, but I doubt it will solve the entire problem.

Aug 18

Elliptical Slice Sampling for priors with non-zero mean

I’ve been playing around with elliptical slice sampling (ESS) lately. This is a new MCMC technique developed by Iain Murray, Ryan Adams, and David MacKay. Here is the original AISTATS paper. The punchline is that this method allows exact joint sampling for vectors whose posterior distribution can be expressed using any Gaussian prior and any (non-conjugate) likelihood. ESS does not have any free parameters, and always accepts its proposal. This makes it favorable compared to, say, Metropolis-Hastings methods, since tuning the lengthscales that control a random walk to achieve good mixing rates requires expensive human time.

One tricky aspect of this method is that it requires a change of variables when applied to sample a variable with non-zero prior mean. This is mentioned in the original paper, but not all that coherently. I thought this deserved a blog post, so that practitioners make sure they get it right. The necessary steps are quite simple for experienced statisticians, so there’s nothing really novel or exciting happening. Read on for more. Hopefully in the near future I’ll report on experiments comparing ESS to other methods on some more interesting models.
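
For readers who want the gist now, here is a minimal sketch of one ESS update with the mean shift folded in. It is written from my reading of the published algorithm, not taken from the authors’ code. The function name `ess_step` and its arguments are my own: `draw_zero_mean_prior` is assumed to return a fresh draw from the zero-mean Gaussian with the prior’s covariance, and `log_lik` is assumed to return the log-likelihood of a state. The change of variables amounts to running the sampler on `f - mu` and adding `mu` back to every proposal:

```python
import math
import random


def ess_step(f, mu, draw_zero_mean_prior, log_lik, rng=random):
    """One elliptical slice sampling update for a Gaussian prior with mean mu.

    f and mu are equal-length lists of floats. The returned state is a new list.
    """
    nu = draw_zero_mean_prior()              # auxiliary draw that defines the ellipse
    g = [fi - mi for fi, mi in zip(f, mu)]   # shift to zero-mean coordinates

    # Slice height: log_lik(f) + log(u) with u uniform on (0, 1].
    log_y = log_lik(f) + math.log(1.0 - rng.random())

    # Initial angle and bracket.
    theta = rng.uniform(0.0, 2.0 * math.pi)
    theta_min, theta_max = theta - 2.0 * math.pi, theta

    while True:
        c, s = math.cos(theta), math.sin(theta)
        # Point on the ellipse, shifted back by the prior mean.
        proposal = [mi + gi * c + ni * s for mi, gi, ni in zip(mu, g, nu)]
        if log_lik(proposal) > log_y:
            return proposal
        # Shrink the bracket toward theta = 0 (the current state) and retry.
        if theta < 0.0:
            theta_min = theta
        else:
            theta_max = theta
        theta = rng.uniform(theta_min, theta_max)
```

Forgetting the shift, that is, rotating `f` itself rather than `f - mu`, silently targets the wrong distribution, which is why the detail is worth spelling out.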

Aug 14

ICML 2012 Highlights

After attending ICML 2012 in Edinburgh a month ago, I suppose a summary of my favorite papers and presentations is long overdue.

Overall, Edinburgh provided a beautiful setting for the conference, and famously dreary Scotland weather even cooperated with a few days of sunshine.

Jul 12

CVPR 2012 Highlights

In early June, I attended CVPR, a premier vision conference. Luckily for me, the conference was right here in Providence just 20 minutes from Brown, so I didn’t have far to go.

Definitely, one highlight was the lobster dinner, served to all 1000+ attendees. After the jump, I’ll summarize some of my favorite research highlights.

Jul 12

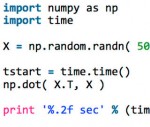

Blog Basics

This sample post shows how this site can include typeset mathematics and short code snippets.

Typesetting is possible via the QuickLaTeX plugin. Code via the SyntaxHighlighter Evolved plugin.
